Engineering Managers are going to hate OpenClaw

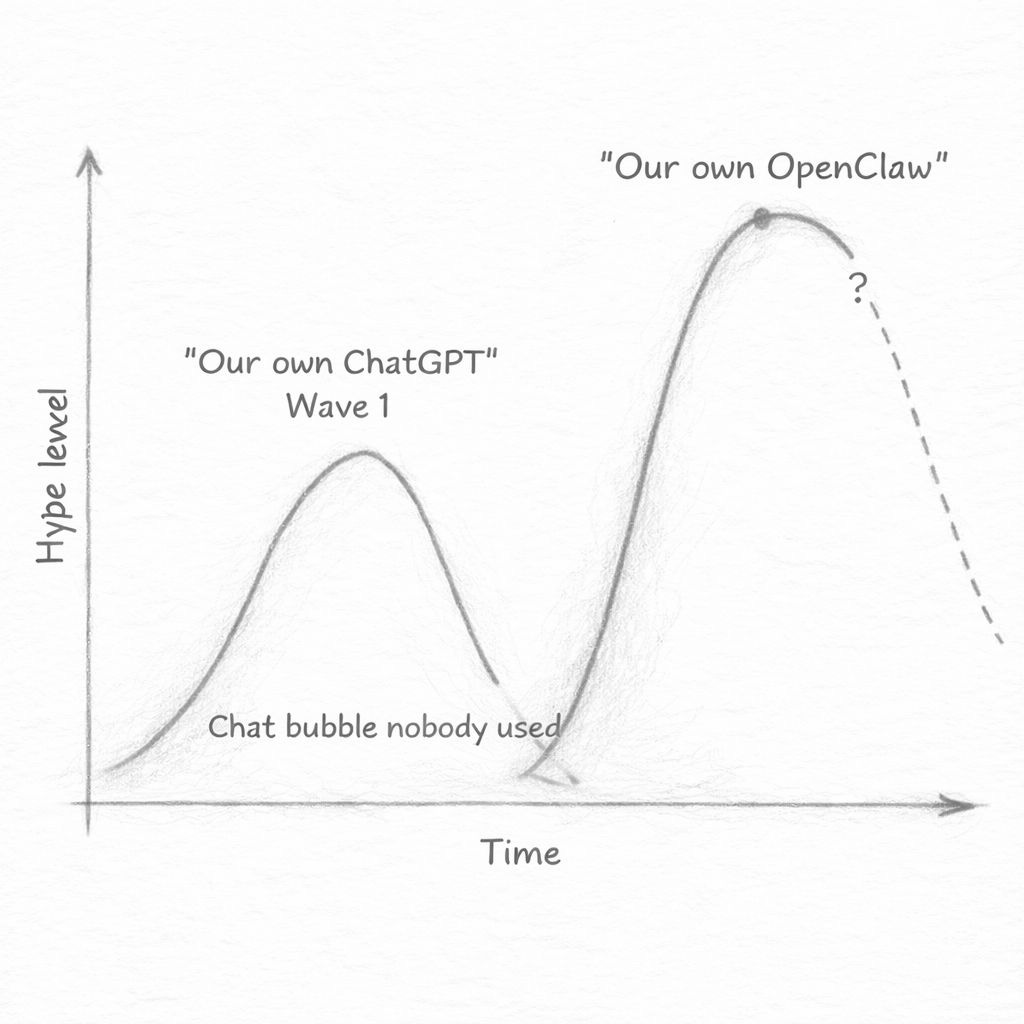

A ChatGPT-like wave of hype is about to hit us, and you should be afraid

Back in Q3 2023, my company’s CPO froze the roadmap and told us to build an LLM-based feature. It was right at the peak of the chatbot hype, when every company wanted its own version of ChatGPT.

I really hate decisions based on hype, so I asked: “What if we have no adoption at all in phase 1? How do we decide whether to continue?” (Phase 1 was about a month of work; the full vision was 3-4 months.)

He replied (honestly, at least): “We’re continuing with all future phases no matter what. It’s a strategic initiative.” In other words - the board wanted an AI feature → we are going to build an AI feature.

In 90% of products, nobody asked for those features or wanted them. Nobody opened their expense tool thinking “I wish I could have a conversation with this.” They wanted to submit an expense.

There were many famous disasters, like a Chevrolet dealership’s chatbot agreeing to sell a car for $1, a supermarket’s bot suggesting poisonous recipes, and Snapchat seeing a huge spike in 1-star reviews after adding its ‘My AI’ feature.

But of course, our teams still had to build the features and deal with the mess. We learned prompt engineering best practices and shipped something to production fast (without even proper evals in place…).

In recent weeks, I’ve started to get the feeling that we’re reaching a similar wave of hype with OpenClaw, and it looks scary.

What Is OpenClaw

In case you missed it - OpenClaw is an open-source GitHub repo that exploded in the last few months, becoming the 8th most-starred project on GitHub with 350k stars (passing React). It will probably reach #1 soon.

It was built by Peter Steinberger, an Austrian developer who connected three things: a messaging app, an LLM, and a terminal. He assumed Google or OpenAI would build the same thing within weeks, but they didn’t. His “small toy” became the fastest-growing open-source project in GitHub history.

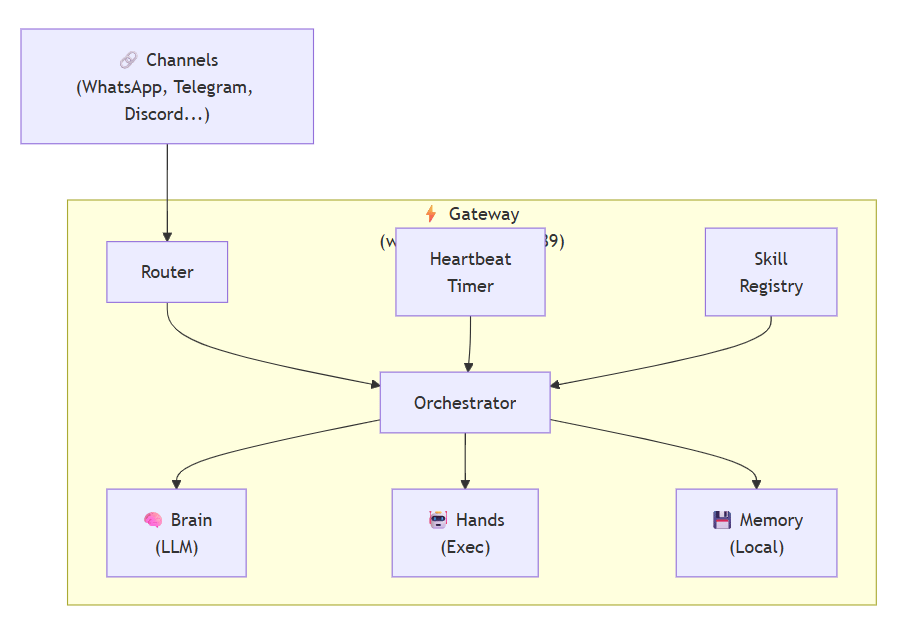

The concept is actually very simple:

You have a single long-running Node.js process (the “Gateway”) that orchestrates everything. It can use any LLM (the ‘brain’) and execute commands on your computer (the ‘hands’).

So far, this is not very different from the Claude Code experience. The difference comes from three other things:

Memory - it writes plain Markdown files to your file system and is quite good at remembering everything you need it to.

Channels - this is what made it go viral. You can talk to it on whatever chat platform you want (Slack, iMessage, WhatsApp, Telegram), which makes it much more accessible and addictive.

Heartbeat - and this is the biggest ‘innovation’. Every 30 minutes, the agent triggers and checks if there is anything that needs to be done, and can proactively send you messages. It can check your Gmail, monitor your deployments, summarize new Slack messages and so on.

The 3rd part, the heartbeat, is where most of the hype is coming from.
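To make the heartbeat idea concrete, here is a minimal Python sketch of what a single tick could look like. It is not OpenClaw’s code (the real Gateway is a Node.js process); `heartbeat_tick`, `run_forever`, `Check`, and the `send` callback are hypothetical names, and each “check” stands in for whatever the agent would actually poll (Gmail, deployments, Slack). Findings are appended to a Markdown file, loosely mirroring the plain-Markdown memory described above.

```python
import time
from datetime import datetime, timezone
from pathlib import Path
from typing import Callable, Optional

HEARTBEAT_SECONDS = 30 * 60  # the 30-minute interval described above

# Hypothetical: a "check" polls one source (Gmail, deployments, Slack...)
# and returns a message for the user, or None if nothing needs attention.
Check = Callable[[], Optional[str]]


def heartbeat_tick(checks: dict[str, Check], memory_file: Path,
                   send: Callable[[str], None]) -> list[str]:
    """Run every check once, log findings to Markdown memory, notify the user."""
    findings = []
    for name, check in checks.items():
        result = check()
        if result is not None:
            findings.append(f"{name}: {result}")
    if findings:
        stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
        with memory_file.open("a", encoding="utf-8") as f:
            f.write(f"\n## Heartbeat {stamp}\n")
            f.writelines(f"- {line}\n" for line in findings)
        # Proactive: nobody asked anything, the agent starts the conversation.
        send("\n".join(findings))
    return findings


def run_forever(checks: dict[str, Check], memory_file: Path,
                send: Callable[[str], None]) -> None:
    """Hypothetical main loop: one tick every HEARTBEAT_SECONDS."""
    while True:
        heartbeat_tick(checks, memory_file, send)
        time.sleep(HEARTBEAT_SECONDS)
```

The key line is the `send(...)` call: the user never typed anything, yet a message arrives. That inversion is the whole difference from a chat-style tool.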

Reactive vs proactive

Let’s imagine you are an engineer working on a tool for accountants, like QuickBooks. In the first wave, you probably built a chatbot that lets users upload expenses and categorize them automatically (with maybe a few clarifying questions).

This is reactive - the user is the one initiating the interaction.

In the “OpenClaw wave”, your users could ideally say: “Monitor my Gmail and keep submitting my expenses. Every morning, send me a report of what you did the day before so I can correct you.”

The agent checks your Gmail every 30 minutes, finds receipts, categorizes them, submits them through the API, and every morning sends you a summary on WhatsApp. You review it over coffee, correct the one it miscategorized, and that’s it.
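Here is a rough Python sketch of that expense loop, to show how little “agent” logic is needed once a heartbeat exists. Everything in it is an assumption for illustration: `Receipt`, `ExpenseAgent`, and `categorize` are hypothetical, the keyword rules stand in for an LLM call, and `submit` stands in for the accounting tool’s API. Note the `seen` set: because the agent looks at the same inbox every 30 minutes, it has to remember what it already submitted, or it will file the same receipt again on every tick.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Receipt:
    message_id: str  # id of the email the receipt came from
    vendor: str
    amount: float


def categorize(receipt: Receipt) -> str:
    """Stand-in for the LLM call; these keyword rules are purely illustrative."""
    rules = {"uber": "Travel", "aws": "Infrastructure", "notion": "Software"}
    return rules.get(receipt.vendor.lower(), "Uncategorized")


@dataclass
class ExpenseAgent:
    seen: set[str] = field(default_factory=set)   # message ids already submitted
    log: list[str] = field(default_factory=list)  # lines for the morning report

    def on_heartbeat(self, inbox: list[Receipt],
                     submit: Callable[[Receipt, str], None]) -> int:
        """One 30-minute tick: submit receipts that weren't handled before."""
        submitted = 0
        for receipt in inbox:
            if receipt.message_id in self.seen:
                continue  # the same email is still in the inbox on every tick
            category = categorize(receipt)
            submit(receipt, category)  # hypothetical: the accounting tool's API
            self.seen.add(receipt.message_id)
            self.log.append(f"{receipt.vendor} ${receipt.amount:.2f} -> {category}")
            submitted += 1
        return submitted

    def morning_report(self) -> str:
        """Summary for the user to review (and correct) over coffee."""
        lines = "\n".join(f"- {entry}" for entry in self.log)
        self.log.clear()
        return "Yesterday I submitted:\n" + lines
```

The morning report is the only human checkpoint here, which is exactly why a miscategorized receipt gets caught the next day rather than before it is submitted.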

Isn’t it just automation?

Actually, yes. I like to think of it as smart automation, now accessible to everyone.

I run manager.dev as a side business. I have expenses to file, content to write, and sponsors to book and negotiate with. I could have partially automated a lot of this years ago by stitching n8n or Make together with LLMs. I never did; it seemed like too much effort.

Now, it’s gotten MUCH easier.

In the last week, I’ve built my ‘AI employees’ in Paperclip (basically a nice way to orchestrate multiple Claude Code processes) and played with OpenClaw (I deployed it on Railway; it breaks quite a lot, so I’ll switch to NanoClaw this coming weekend).

The tech is not there yet, but it’s very close. I can understand the excitement.

And that’s what makes this wave more dangerous than the first one. When you build an automation yourself, whether in n8n, Make, or inside a SaaS you use, you do it mindfully. You think about what you want to achieve, you clearly define it (or at least arrive at a definition through trial and error), and you (usually) test it.

With prompting, you are much less careful.

How it’ll impact your product

The ‘ChatGPT’ hype will repeat itself. Your board/exec team will feel your product needs to be more ‘agentic’ and include ‘OpenClaw-like’ features to stay relevant.

And for some products, they might be right.

Take Linear’s agent, for example (not sponsored this time!). As their CEO put it, issue tracking is dead. For the last month, my team has used Linear almost exclusively through Claude Code. Imagine if salespeople around the world started using Salesforce only through OpenClaw or similar products that will pop up. Tools that become just ‘databases’ might become obsolete, or at least easier to replace.

And it’s not like there will be just one agent that solves all your problems, just as there won’t be a “single SaaS” to run your company. In her great article, Claire Vo shared her OpenClaw setup, which uses 9 different agents for different purposes (from family to her podcast to content).

Here are some examples of what those agents could look like:

Datadog agent. Monitors your error rate every 30 minutes. Spike detected at 3am, pages the right person, pulls the relevant logs, drafts the incident summary, and posts it to Slack.

Salesforce agent. Monitors your pipeline every 30 minutes and sends follow-up emails to prospects.

Greenhouse/Ashby agent. Checks new applications every morning, scores them against the job criteria, surfaces the top three, and automatically sends those candidates time slots to schedule an interview.
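To illustrate the Datadog example, here is a hedged Python sketch of just the “should I wake someone up?” step. It does not call any Datadog API: the error-rate history, the on-call name, and the recent logs are assumed to be fetched elsewhere, and `is_error_spike`, `error_rate_heartbeat`, and `Incident` are hypothetical names. The “3x the recent average” rule is a simplistic assumption, the kind of threshold a team would normally define deliberately rather than leave to a prompt.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Incident:
    page: str        # who gets paged
    slack_post: str  # drafted incident summary for Slack


def is_error_spike(history: list[float], current: float,
                   factor: float = 3.0, floor: float = 0.01) -> bool:
    """Assumed rule: spike if the current rate exceeds `factor` x the recent average.

    `floor` keeps a near-zero baseline from turning every blip into a page.
    """
    baseline = mean(history) if history else 0.0
    return current > max(baseline * factor, floor)


def error_rate_heartbeat(history: list[float], current: float, on_call: str,
                         recent_logs: list[str]) -> Optional[Incident]:
    """One 30-minute check. Returns an incident to act on, or None."""
    if not is_error_spike(history, current):
        return None
    baseline = mean(history) if history else 0.0
    lines = [f"Error rate is {current:.1%} vs a baseline of {baseline:.1%}.",
             "Recent errors:"]
    lines += [f"- {entry}" for entry in recent_logs[:5]]  # keep the post short
    return Incident(page=on_call, slack_post="\n".join(lines))
```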

Some of those features might actually be useful, but I’m sure there will be some much bigger disasters too. Think of a McDonald’s agent that detects what you’re craving and orders your food before you even open the app, or a Notion agent that reorganizes your workspace overnight because it decided your folder structure was too messy.

In any case, you’ll still be required to implement it.

Your part in it

We can’t let this conversation happen without us.

The “our own OpenClaw” discussion is already happening in your company, probably between your CPO and CEO, who have both read some hype tweets.

If Engineering Managers aren’t in that conversation early, we’ll get a requirement that was designed without understanding the possible blast radius, the infra work, or the difference between a good agent workflow and a shitty (and dangerous) one.

That’s how the first wave went… But this time the impact might be worse than an unused feature. A chatbot that gives wrong answers is embarrassing, but an agent that acts on wrong assumptions is like a bomb.

Final words

I believe we have a big headache coming, but it can also be an exciting change.

You have enough on your plate, and this feels like complete bullshit hype, I know. I still highly recommend setting aside a couple of hours to play with OpenClaw/NanoClaw/Paperclip or any tool in this area.

Having at least some early experience on the consumer side can help you a lot in upcoming conversations. Your PM is already thinking about this; maybe you should too.

Discover weekly

The Two Most Powerful Words in Any Negotiation by Marc Randolph. I love Marc’s storytelling; it’s a super relevant read for any manager of people.

How Multiplayer Pomodoro Cured My Productivity Debt by Michał Poczwardowski. A peer-pressure-based productivity method I really liked 🙂

OpenClaw: The complete guide to building, training, and living with your personal AI agent by Claire Vo. A really nice start for playing with it yourself.

P.S. The publication moved to Beehiiv, but thanks to Pontus for the tip to publish here too :)
