Convincing people to make risky decisions is a big part of the engineering world:

Your manager wants you to fix all security alerts, but you feel it’s a waste of time.

Your PM wants to release a feature, but you think it needs more testing.

Your chief architect wants a multi-region architecture, and you don’t agree.

Some people (like me) take that a bit too far:

(I promise we’ll get back to engineering in a moment)

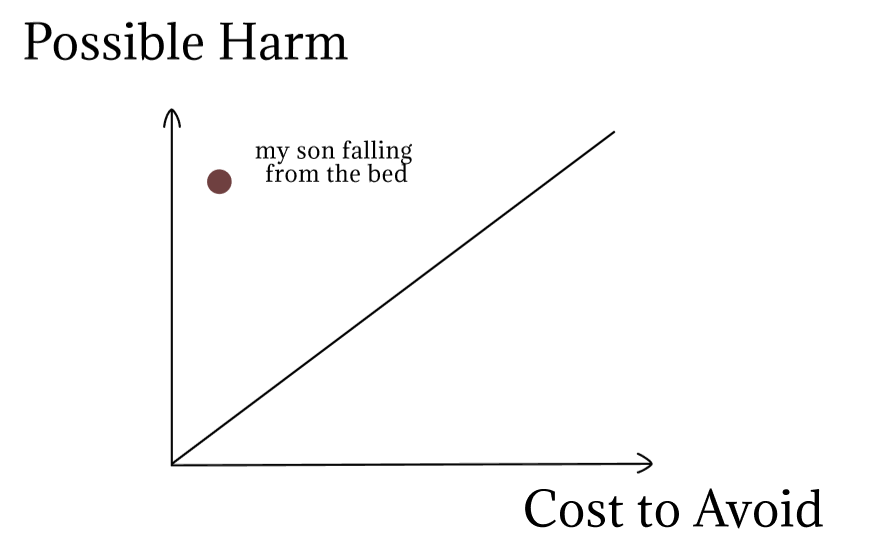

I have a 6-month-old son who doesn't crawl yet. One day, I put him in the middle of our big bed as I always do and turned around to put some clothes in the closet.

Suddenly, I heard a loud 'Boom' and a huge cry from behind me.

I turned around and saw him lying on the floor, in shock. My heart dropped. You can't even imagine how scared I was. My first thought was that he had hit his head and was going to die.

My wife had told me to keep a close eye on him, as the first movement is always unexpected. I thought there was no way he'd just start moving without some signs, so I ignored the warnings.

Luckily, he was just in shock and slightly bruised, but he could easily have been seriously injured. I still feel guilty about it, because I had been warned, and it would have cost me very little to keep a close eye on him.

You are probably less interested in my parenting mistakes, so let’s get to the point :)

Since that incident, I have been examining my reckless approach toward risk to understand how I could approach it differently.

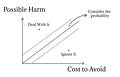

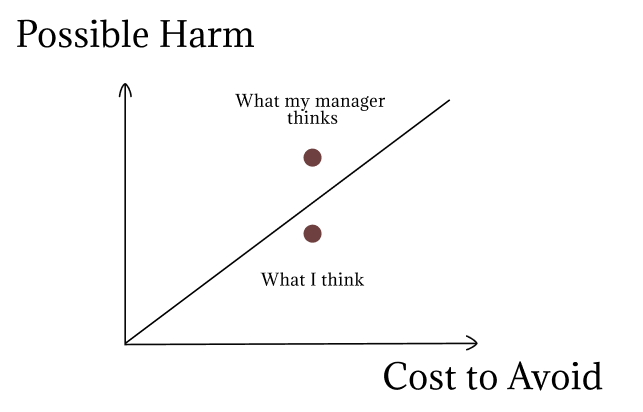

The simplest mental model I came up with:

Here is a very simplistic approach - everything above the line you should avoid, everything under the line you can ignore.
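The rule above can be sketched in a few lines of Python. This is only an illustration under my own assumptions: harm and cost to avoid are subjective scores on a 0 to 10 scale, harm is on the vertical axis, and the line is the diagonal where harm equals cost. The `Risk` class, `should_avoid` function, and example scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    harm: float           # assumed scale: 0 (harmless) to 10 (catastrophic)
    cost_to_avoid: float  # assumed scale: 0 (free) to 10 (prohibitively expensive)


def should_avoid(risk: Risk) -> bool:
    """Assumed rule: a risk is 'above the line' when harm exceeds the cost to avoid it."""
    return risk.harm > risk.cost_to_avoid


# Illustrative scores only; your own estimates will differ.
baby_on_bed = Risk("baby falls off the bed", harm=9, cost_to_avoid=1)
multi_region_startup = Risk("region outage at a small startup", harm=3, cost_to_avoid=8)

for risk in (baby_on_bed, multi_region_startup):
    verdict = "avoid" if should_avoid(risk) else "ignore"
    print(f"{risk.name}: {verdict}")
```

The numbers matter far less than the fact that you had to write down both of them.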

Examining tech decisions through the model

Cloud infrastructure setup

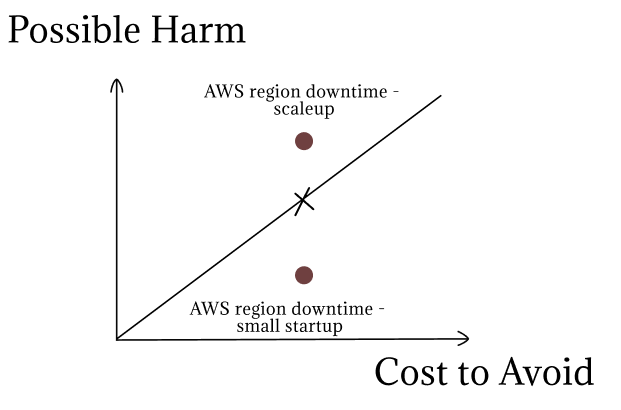

Let’s examine the single-region vs multi-region setup.

The harm of downtime is the deciding factor.

In a small startup, it’s probably not worth it. In our case, downtime won't be catastrophic: even 24 hours of downtime won't kill our business. If you are a fintech company responsible for payments, your answer will be completely different. A few hours of downtime might kill you, so multi-region will be worth the effort. The same goes for a large scale-up right before an IPO.

Let’s take another example - a whole cloud provider going down.

The cost to set up a multi-cloud infrastructure is very high. Still, as you grow, one day it’ll be worth the effort.

The tough part is to constantly assess your options and understand when the balance starts to tip in favor of avoiding the risk.
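To make "how much downtime can we live with" concrete, here is a small sketch that converts an uptime target into a yearly downtime budget. The function name is my own, and it assumes a 365-day year.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(uptime_percent: float) -> float:
    """Yearly downtime budget, in minutes, implied by an uptime target."""
    return MINUTES_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime -> {minutes:.1f} minutes of downtime per year")
```

Five nines leaves roughly five minutes of downtime a year. A business that can survive one 24-hour outage a year is, in these terms, fine with about 99.7% uptime.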

A great article on this topic: How much uptime you can afford. 99.999% is definitely NOT for everyone.

Security

So your GitHub Dependabot is constantly bugging you with security alerts. You have 100+ microservices, and some legacy code you inherited from another company you acquired. This means hundreds of security vulnerabilities that need to be addressed.

How would you place the risk of having a security breach on the graph?

What I think: we are an AgTech company, not a banking one. Our data is not sensitive, we are still small, and there are no good reasons for hackers to focus on us.

What my manager thinks: I’ve lived through a security incident, it’s hell. It can cost millions to resolve and might ruin the company.

So who is right?

It doesn’t really matter, he is the one deciding on our policy 😂

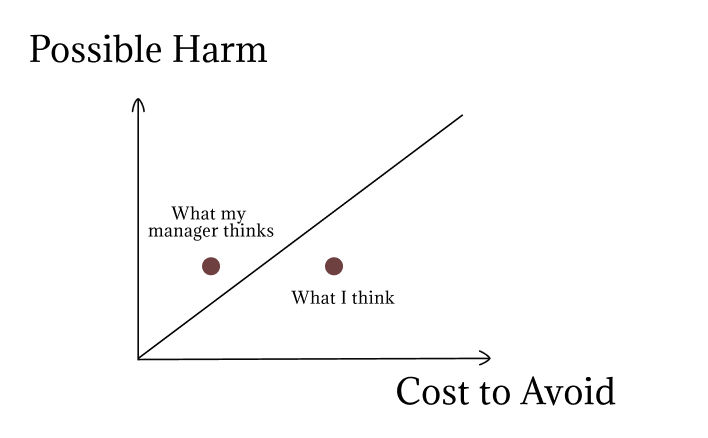

Jokes aside, the important part is to understand why we think differently. Once you understand where the difference lies, it’s much easier to talk about it.

For example, it might have looked like this:

We both agree about the harm, but my manager thinks that it is not that hard to continuously fix all those alerts. This means we need to have a completely different conversation!

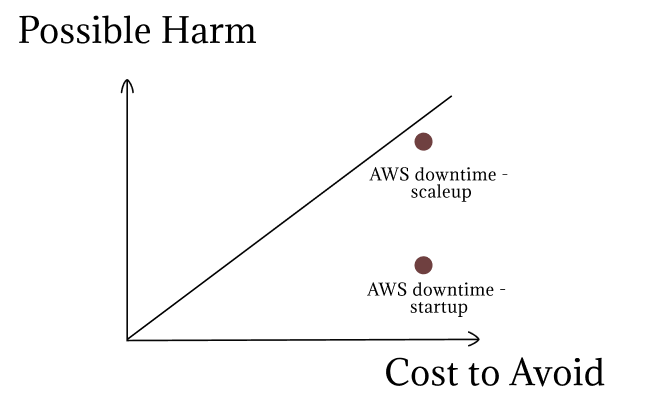

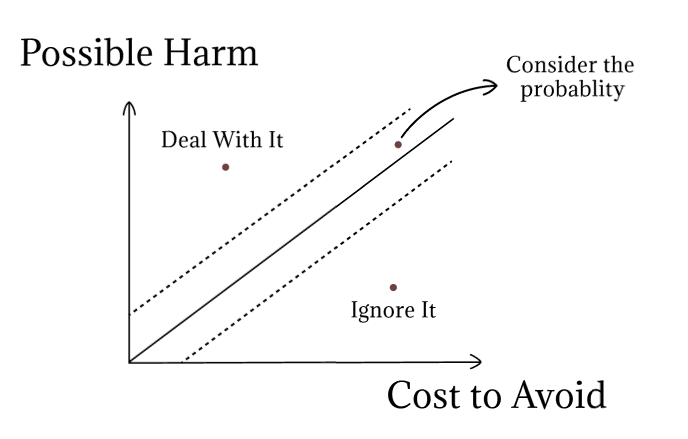
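Finding where two people actually disagree can be sketched like this. It is a hypothetical illustration: the `Assessment` class, the 0 to 10 scores, and the `biggest_disagreement` helper are my assumptions, not a real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    who: str
    harm: float           # assumed scale: 0 to 10
    cost_to_avoid: float  # assumed scale: 0 to 10


def biggest_disagreement(a: Assessment, b: Assessment) -> str:
    """Return the axis on which the two estimates differ the most."""
    gaps = {
        "harm": abs(a.harm - b.harm),
        "cost_to_avoid": abs(a.cost_to_avoid - b.cost_to_avoid),
    }
    return max(gaps, key=lambda axis: gaps[axis])


# Scenario 1: we disagree on how bad a breach would be.
me = Assessment("me", harm=3, cost_to_avoid=8)
manager = Assessment("manager", harm=9, cost_to_avoid=8)
print(biggest_disagreement(me, manager))

# Scenario 2: we agree on the harm, but not on the effort to fix all alerts.
me_alt = Assessment("me", harm=8, cost_to_avoid=8)
manager_alt = Assessment("manager", harm=8, cost_to_avoid=3)
print(biggest_disagreement(me_alt, manager_alt))
```

In the first scenario the conversation should be about what a breach would really cost us. In the second, it should be about how much work the alerts actually are.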

Adding probability to the mix

I confess, I cheated a bit above.

I mentioned that I thought: “There are no good reasons for hackers to focus on us”. This doesn’t relate to harm, nor to cost - this relates to the probability of the risk materializing.

In my son’s example, the probability is what caused me to behave irresponsibly. I knew the harm was high, but I assumed the probability was low.

The truth is that we humans are terrible at judging probabilities.

So in my opinion, probability should only be used as a tiebreaker.

Harmful situations that are easy to avoid should be addressed even if the probability is low.

Do you have a fire extinguisher in your house? It will probably never be used, but you won't forgive yourself if you need it and it's not there just because you thought the risk of a fire was low.

Here is the addition to the model:

Only once you agree on the possible harm, and the cost to avoid, is it worth talking about the probability.
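Here is one way the tiebreaker idea could look in code. It is a sketch under my own assumptions: the 0 to 10 scores, the `tie_margin` that defines a "close call", and the 0.5 probability threshold are all made up for illustration.

```python
def decide(harm: float, cost_to_avoid: float, probability: float,
           tie_margin: float = 1.0) -> str:
    """Decide on harm vs. cost first; consult probability only on close calls.

    harm and cost_to_avoid are assumed 0-10 scores, probability is 0.0-1.0.
    tie_margin and the 0.5 threshold are arbitrary illustrative values.
    """
    gap = harm - cost_to_avoid
    if gap > tie_margin:
        return "avoid"   # clearly above the line: probability is not consulted
    if gap < -tie_margin:
        return "ignore"  # clearly below the line: probability is not consulted
    # Close call: only now does probability act as the tiebreaker.
    return "avoid" if probability >= 0.5 else "ignore"


# High harm, trivial to avoid, low probability: still avoid.
print(decide(harm=9, cost_to_avoid=1, probability=0.05))

# Harm and cost are roughly equal, so probability breaks the tie.
print(decide(harm=5, cost_to_avoid=5, probability=0.1))
```

Note that in the first call the low probability never enters the decision, which is exactly the point of the fire extinguisher example.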

Final words

Like all mental models, it never fits all cases. For example, events with minuscule probability (like an airplane crashing into your house because you live close to the airport) are not a very good fit here.

The goal when using it is NOT to agree on the exact place on the graph, but to understand why other people think differently.

I should have thought about my crawling baby situation like that: “I think that the probability is very low. The possible harm is very high, and it’s very easy to avoid, so I should avoid it anyway.”

What I enjoyed reading this week

Mastering incentives at work. If you are interested in mental models, this is the place to go.

Leadership requires personal risk by Will Larson. An interesting approach to putting your career on the line.

The secret for addictive websites (that keep users hooked). Another simple but genius idea by Tom!

Good tech examples, Anton, and great use of a personal story!

Humans’ perception of probability is something that still intrigues me. By understanding it just a bit better, our decisions can be improved. With low probabilities, we too often assume that it will not happen.

Thanks for the share.

A great mental model, Anton! I think along the same lines. Sorry for your son also, congrats for crawling 😄